Troubleshooting Scenario 2 – Role Recycling After Running Fine For 2 Weeks

Continuing from the diagnostic information at Windows Azure PaaS Compute Diagnostics Data, this blog post will describe how to troubleshoot a role that begins recycling after it has been running fine for some period of time. This is a fairly common scenario and the first instinct is to naturally blame Azure/Microsoft (“Hey! This thing was running fine for a long time so it must be a problem with your datacenter!”).

If this is your first exposure to this troubleshooting scenario series I would recommend starting with Windows Azure PaaS Compute Diagnostics Data which will walk through the diagnostic data locations and link to the earlier troubleshooting scenarios which lay out some fundamental troubleshooting techniques.

Symptom

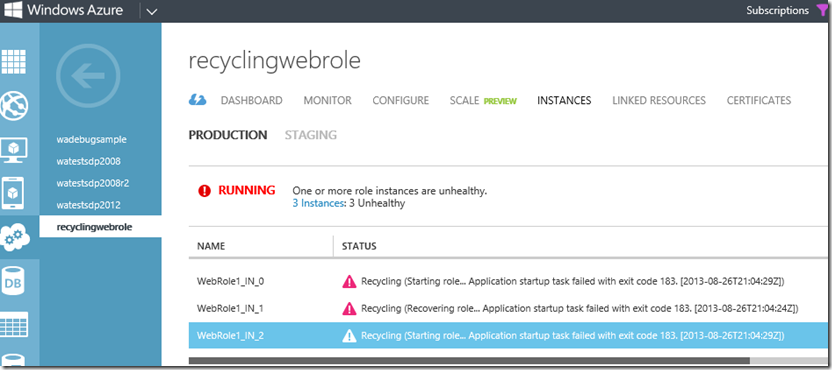

You have deployed a hosted service to Azure and it is working great. A couple weeks go by and you start getting alerts from your monitoring service that your Azure hosted site is down. You go to the portal and you see your instances in a Recycling state (cycling between the Starting, Busy, Recycling, Initializing, etc states).

Immediately assuming it must be a Microsoft problem, because you haven’t changed anything, you check the Service Health Dashboard and see that everything is operating normally. Time to start troubleshooting.

Get the Big Picture

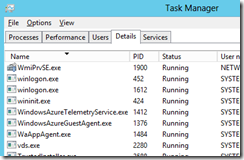

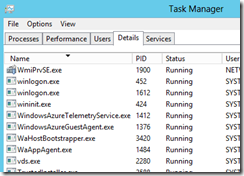

Just like in Scenario 1 we want to RDP onto the Azure VM and get a high level overview of what is happening on the VM. Similar to Scenario 1 we start off only seeing WindowsAzureGuestAgent running:

Then after a few seconds we see WaHostBootstrapper, but unlike Scenario 1 we do not see WaIISHost start, and then a few seconds later WaHostbootstrapper goes away.

As we know from scenario 1, if we only see WaHostBootstrapper then it is probably a startup task that is failing.

Check the Logs

According to Windows Azure PaaS Compute Diagnostics Data, WaHostBootstrapper logs are in C:\Resources\WaHostBootstrapper.log. A new host bootstrapper log is written every time the WaHostBootstrapper process is started and the old one is saved as WaHostBootstrapper.log.old.<index> (up to a maximum of 15 old logs), and looking in the C:\Resources folder I can see 16 log files which indicates several restarts of WaHostBootstrapper (ie. a recycling role). The current log file (WaHostBootstrapper.log) will probably only be partially written because it is the host bootstrapper that is failing and exiting, so I am more interested in one of the older log files which will show the last log entry written before the process exited. Opening WaHostBootstrapper.log.old.001 we see the following:

WaHostBootstrapper log:

These first several entries are standard and denote the host bootstrapper startup:

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- WapXmlReadRoleModel=0x1

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- WapXmlReadContainerId=0x1

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- WapGetVirtualAccountName=0x1

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- WapGetAppCmdPath=0x1

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- WapSetDefaultEnvironment=0x1

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- WapGetAppHostConfigPath=0x1

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- GetDebugger=0x1

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- GetStartupTaskDebugger=0x1

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:03.620, ERROR] <- WapGetEnvironmentVariable=0x800700cb

You will then see several startup tasks executing for IISConfigurator (if running a web role), modules defined in <Import> elements in the csdef file, and startup tasks. Note that the 0x800700cb returns from WapGetEnvironmentVariable are normal and can be ignored*. Note that the startup tasks with “type=0” are simple types which block host bootstrapper progression until they exit, and startup tasks with “type=2” are background and host bootstrapper will continue execution while they run.

[00002068:00002012, 2013/08/26, 21:21:03.620, INFO ] Executing Startup Task type=0 rolemodule=IISConfigurator cmd="E:\base\x64\IISConfigurator.exe"

[00002068:00002012, 2013/08/26, 21:21:03.620, INFO ] Executing "E:\base\x64\IISConfigurator.exe" .

[00002068:00002012, 2013/08/26, 21:21:03.683, INFO ] Program "E:\base\x64\IISConfigurator.exe" exited with 0. Working Directory = E:\base\x64

[00002068:00002012, 2013/08/26, 21:21:03.683, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:03.683, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:03.683, INFO ] Executing Startup Task type=2 rolemodule=Diagnostics cmd="E:\plugins\Diagnostics\DiagnosticsAgent.exe"

[00002068:00002012, 2013/08/26, 21:21:03.683, INFO ] Executing "E:\plugins\Diagnostics\DiagnosticsAgent.exe" .

[00002068:00002012, 2013/08/26, 21:21:03.745, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:03.745, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:03.745, INFO ] Executing Startup Task type=0 rolemodule=Diagnostics cmd="E:\plugins\Diagnostics\DiagnosticsAgent.exe" /blockStartup

[00002068:00002012, 2013/08/26, 21:21:03.745, INFO ] Executing "E:\plugins\Diagnostics\DiagnosticsAgent.exe" /blockStartup.

[00002068:00004008, 2013/08/26, 21:21:04.276, INFO ] Registering client with PID 3888.

[00002068:00004008, 2013/08/26, 21:21:04.276, INFO ] Client DiagnosticsAgent.exe (3888) registered.

[00002068:00003276, 2013/08/26, 21:21:04.620, INFO ] Registering client with PID 4076.

[00002068:00003276, 2013/08/26, 21:21:04.620, INFO ] Client DiagnosticsAgent.exe (4076) registered.

[00002068:00002012, 2013/08/26, 21:21:09.825, INFO ] Program "E:\plugins\Diagnostics\DiagnosticsAgent.exe" /blockStartup exited with 0. Working Directory = E:\plugins\Diagnostics

[00002068:00002012, 2013/08/26, 21:21:09.825, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:09.825, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:09.825, INFO ] Executing Startup Task type=2 rolemodule=RemoteAccess cmd="E:\plugins\RemoteAccess\RemoteAccessAgent.exe"

[00002068:00002012, 2013/08/26, 21:21:09.825, INFO ] Executing "E:\plugins\RemoteAccess\RemoteAccessAgent.exe" .

[00002068:00002012, 2013/08/26, 21:21:09.825, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:09.825, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:09.825, INFO ] Executing Startup Task type=0 rolemodule=RemoteAccess cmd="E:\plugins\RemoteAccess\RemoteAccessAgent.exe" /blockStartup

[00002068:00002012, 2013/08/26, 21:21:09.825, INFO ] Executing "E:\plugins\RemoteAccess\RemoteAccessAgent.exe" /blockStartup.

[00002068:00003308, 2013/08/26, 21:21:10.185, INFO ] Registering client with PID 3448.

[00002068:00003356, 2013/08/26, 21:21:10.200, INFO ] Registering client with PID 908.

[00002068:00003356, 2013/08/26, 21:21:10.200, INFO ] Client RemoteAccessAgent.exe (908) registered.

[00002068:00003308, 2013/08/26, 21:21:10.232, INFO ] Client RemoteAccessAgent.exe (3448) registered.

[00002068:00002012, 2013/08/26, 21:21:10.575, INFO ] Program "E:\plugins\RemoteAccess\RemoteAccessAgent.exe" /blockStartup exited with 0. Working Directory = E:\plugins\RemoteAccess

[00002068:00002012, 2013/08/26, 21:21:11.122, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:11.122, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:11.122, ERROR] <- WapGetEnvironmentVariable=0x800700cb

[00002068:00002012, 2013/08/26, 21:21:11.122, INFO ] Executing Startup Task type=0 rolemodule=RemoteForwarder cmd="E:\plugins\RemoteForwarder\install.cmd"

[00002068:00002012, 2013/08/26, 21:21:11.138, INFO ] Executing "E:\plugins\RemoteForwarder\install.cmd" .

[00002068:00002012, 2013/08/26, 21:21:11.388, INFO ] Program "E:\plugins\RemoteForwarder\install.cmd" exited with 0. Working Directory = E:\plugins\RemoteForwarder

And finally we see the last startup task executing before the host bootstrapper process exits:

[00002068:00002012, 2013/08/26, 21:21:11.388, INFO ] Executing Startup Task type=0 rolemodule=(null) cmd="E:\approot\bin\StartupTasks\Startup.cmd"

[00002068:00002012, 2013/08/26, 21:21:11.388, INFO ] Executing "E:\approot\bin\StartupTasks\Startup.cmd" .

[00002068:00002012, 2013/08/26, 21:21:11.497, INFO ] Program "E:\approot\bin\StartupTasks\Startup.cmd" exited with 183. Working Directory = E:\approot\bin

[00002068:00002012, 2013/08/26, 21:21:11.497, ERROR] <- WaitForProcess=0x80004005

[00002068:00002012, 2013/08/26, 21:21:11.497, ERROR] <- ExecuteProcessAndWait=0x80004005

[00002068:00002012, 2013/08/26, 21:21:11.497, ERROR] <- WapDoStartup=0x80004005

[00002068:00002012, 2013/08/26, 21:21:11.497, ERROR] <- DoStartup=0x80004005

[00002068:00002012, 2013/08/26, 21:21:11.497, ERROR] <- wmain=0x80004005

The interesting thing to note is that the last startup task to run is E:\approot\bin\StartupTasks\Startup.cmd, which is the custom startup task that we have deployed with this service. WaHostBootstrapper expects all startup tasks to exit with a success (a 0 return code), and considers any non-zero return code to be a failure which causes the host bootstrapper to exit and recycle the role. We can see in this case that our Startup.cmd startup task is exiting with a 183 exit code which WaHostBootstrapper sees as a fatal error. Now that we know which process is failing it is time to go find out why.

*Additional WaHostBootstrapper messages which can be ignored:

ERROR] Failed to connect to client RemoteAccessAgent.exe (3120). {Note that RemoteAccessAgent could be any startup task, and 3120 could be any PID}

ERROR] <- CRuntimeClient::OnRoleStatusCallback(0x00000035CFE86E00) =0x800706ba

Check the startup task

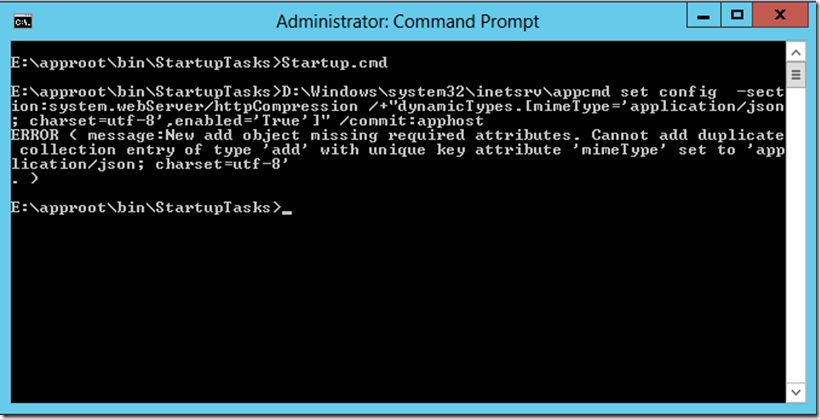

First lets browse to the startup task and open it in notepad to see what it is doing:

Startup.cmd

%windir%\system32\inetsrv\appcmd set config -section:system.webServer/httpCompression /+"dynamicTypes.[mimeType='application/json; charset=utf-8',enabled='True']" /commit:apphost

This startup task is pretty simple (just one line) so we know which command is failing, and at this point we could do some internet searches for ‘appcmd error 183’ or something like that. But a better way to troubleshoot this, especially if the startup script is more complicated, is to just run it manually from a command prompt and see if we get a better error message.

We can clearly see that appcmd is throwing an error because we are trying to add a duplicate collection entry. The simple way to fix this is to add an ‘exit /b 0’ to the end of our startup script as per the documentation (and all over the web).

Why Did This Just Now Start Failing?

We found the root cause and fixed the issue, but the next logical question is why did this problem just now start happening? My site monitoring alerts tell me that I started getting failures at 08/26/2013 20:48:30 UTC so lets see what was going on at that time:

From the Role Architecture diagram we know that the guest agent is responsible for running everything in the guest OS, so starting there we look for logs in C:\Logs.

We see that prior to my monitoring alerts the instance is in the Ready state, which means that according to the Azure guest agent this instance is working fine and in load balancer rotation.

[00000010] [08/26/2013 20:47:57.45] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 has current state Started, desired state Started, and goal state execution status UpdateSucceeded.

[00000009] [08/26/2013 20:47:57.72] [HEART] WindowsAzureGuestAgent Heartbeat.

[00000009] [08/26/2013 20:47:57.72] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 is reporting state Ready.

[00000010] [08/26/2013 20:48:02.45] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 has current state Started, desired state Started, and goal state execution status UpdateSucceeded.

[00000009] [08/26/2013 20:48:02.74] [HEART] WindowsAzureGuestAgent Heartbeat.

[00000009] [08/26/2013 20:48:02.74] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 is reporting state Ready.

[00000010] [08/26/2013 20:48:07.45] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 has current state Started, desired state Started, and goal state execution status UpdateSucceeded.

[00000007] [08/26/2013 20:48:07.77] [HEART] WindowsAzureGuestAgent Heartbeat.

[00000007] [08/26/2013 20:48:07.77] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 is reporting state Ready.

[00000010] [08/26/2013 20:48:12.47] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 has current state Started, desired state Started, and goal state execution status UpdateSucceeded.

[00000009] [08/26/2013 20:48:12.80] [HEART] WindowsAzureGuestAgent Heartbeat.

[00000009] [08/26/2013 20:48:12.80] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 is reporting state Ready.

[00000010] [08/26/2013 20:48:17.47] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 has current state Started, desired state Started, and goal state execution status UpdateSucceeded.

[00000007] [08/26/2013 20:48:17.81] [HEART] WindowsAzureGuestAgent Heartbeat.

[00000007] [08/26/2013 20:48:17.81] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 is reporting state Ready.

[00000010] [08/26/2013 20:48:22.48] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 has current state Started, desired state Started, and goal state execution status UpdateSucceeded.

[00000009] [08/26/2013 20:48:22.83] [HEART] WindowsAzureGuestAgent Heartbeat.

[00000009] [08/26/2013 20:48:22.83] [INFO] Role e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 is reporting state Ready.

Now we see a new Goal state being received, and the goal state is ‘Stopped’.

[00000009] [08/26/2013 20:48:22.84] [INFO] Goal state 3 received.

[00000009] [08/26/2013 20:48:22.84] [INFO] Received stop role deadline hint: 300000.

[00000009] [08/26/2013 20:48:22.84] [INFO] Machine goal state is Stopped. Stopping all roles.

The guest agent begins shutting down the VM.

[00000009] [08/26/2013 20:48:22.84] [INFO] Stopping container.

[00000009] [08/26/2013 20:48:22.84] [INFO] Execution for container 0db3c7b9-521a-4681-885c-dd363d797e61 is being stopped.

[00000010] [08/26/2013 20:48:22.84] [INFO] Role State Executor for e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 is exiting.

[00000011] [08/26/2013 20:48:22.84] [INFO] Role State Inquirer for e85c82ee2e5b48f7ac6f88a8848dba16.WebRole1_IN_0 is exiting.

[00000009] [08/26/2013 20:48:44.21] [INFO] Container stopped.

[00000009] [08/26/2013 20:48:44.24] [INFO] Initiating system shutdown.

[00000009] [08/26/2013 20:48:44.26] [INFO] Agent Runtime shutdown.

[00000005] [08/26/2013 20:48:44.69] [INFO] WindowsAzureGuestAgent stopping.

[00000005] [08/26/2013 20:48:44.69] [INFO] Stopping state driver.

[00000005] [08/26/2013 20:48:44.69] [INFO] State driver stopped.

[00000005] [08/26/2013 20:48:44.69] [INFO] Un-initializing Container State Machine.

[00000005] [08/26/2013 20:48:44.69] [INFO] Container already stopped.

[00000005] [08/26/2013 20:48:44.69] [INFO] Agent runtime already uninitialized.

[00000005] [08/26/2013 20:48:44.69] [INFO] Clearing Deployment Management URIs, if set.

[00000005] [08/26/2013 20:48:44.69] [INFO] Container State Machine uninitialized.

[00000009] [08/26/2013 20:48:44.69] [INFO] WindowsAzureGuestAgent stopped successfully.

And about a minute later the guest agent is starting up again.

[00000005] [08/26/2013 20:49:15.00] [INFO] WindowsAzureGuestAgent starting. Version 2.1.0.0

The System Event Logs also contain an entry at that time saying the Azure guest agent is initiating a system shut down:

The process D:\Packages\GuestAgent\GuestAgent\WindowsAzureGuestAgent.exe (RD00155D66C9DA) has initiated the shutdown of computer RD00155D66C9DA on behalf of user NT AUTHORITY\SYSTEM for the following reason: Legacy API shutdown

Reason Code: 0x80070000

Shutdown Type: shutdown

Comment:

Why is Azure shutting down the VM? Get a more in depth look from Role Instance Restarts Due to OS Upgrades, along with some tips that would have helped you avoid this service interruption to begin with.