Precompiled Header Files in Visual Studio 2010

Hello, this is Andy Rich from the Visual C++ front-end team. Today, I’ll be discussing the use of precompiled header files (aka PCH files) in our new intellisense architecture.

Back in May, Boris briefly mentioned an intellisense optimization based on precompiled header technology. This post will elaborate on that comment by providing a glimpse into how Intellisense PCH files (or iPCH files) work. We’ve all become accustomed to precompiled headers improving build throughput, and now in Visual Studio 2010, we use the same technology to improve intellisense performance in the IDE.

The Problem with “Pre-parses”

The VC++ 2010 intellisense engine mimics the command-line compiler by using a translation unit (TU) model to service intellisense requests. A typical translation unit consists of a single source file and the header files it includes (and the headers those headers include, and so on). The intellisense engine exists to answer questions such as what a particular type is, what the exact signature of a function (and its overloads) is, or which variables in the current scope begin with a particular prefix.

To provide this information, the intellisense engine must first parse the TU the way the command-line compiler does, recursively parsing all #include files listed at the top of the source file before parsing the rest of it. Thanks to C++ scoping rules, we can skip every method body except the one you are currently editing, but apart from this optimization, the rest of the translation unit must be parsed to give an accurate answer. We refer to this as the “pre-parse.”

A new pre-parse is not required after every edit, because users spend much of their time writing code inside a local scope such as a function body. By carefully tracking user edits, we can determine whether an edit has changed information that requires a new pre-parse. When it has, we throw away the old pre-parse and start again.
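The decision described above can be modeled roughly as follows. This is a simplified sketch, not the engine's actual logic: it assumes the previous pre-parse recorded the character range of each function body, and it treats an edit as local only if the edit falls entirely inside one of those ranges. The names `Range`, `editIsLocal`, and `needsNewPreParse` are hypothetical.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical half-open range [begin, end) of character offsets in a source file.
struct Range {
    std::size_t begin;
    std::size_t end;
};

// True if the edited range lies entirely inside one recorded function body.
// Simplification: body ranges come from the last pre-parse, and an edit that
// stays inside a body is assumed not to affect declarations outside it.
bool editIsLocal(const Range& edit, const std::vector<Range>& bodies) {
    for (const Range& body : bodies) {
        if (edit.begin >= body.begin && edit.end <= body.end) {
            return true;
        }
    }
    return false;
}

// A new pre-parse is needed only when an edit escapes every function body.
bool needsNewPreParse(const Range& edit, const std::vector<Range>& bodies) {
    return !editIsLocal(edit, bodies);
}
```

Typing inside a function keeps the existing pre-parse; an edit that touches a declaration at namespace scope, or straddles the end of a body, triggers a new one.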

So even though you aren’t editing your header files, they must be re-parsed as part of every pre-parse. As a translation unit grows, each pre-parse requires more CPU and memory, and intellisense performance drops. Parsing is slow, and parsing a lot of information (as in a complex translation unit) can be very slow: 3 seconds is not uncommon, and that is simply too long for an intellisense response.

The PCH Model

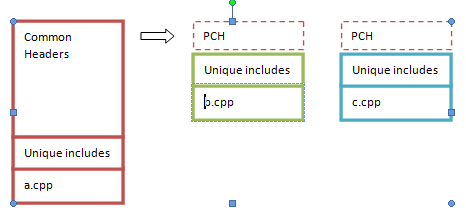

Luckily, there is an optimization developed for command-line compilers that can also be applied to the intellisense compiler: precompiled headers (PCHs). The PCH model presupposes that most of your translation units share a large set of common includes. You tell the compiler which headers make up this set, and it builds a precompiled header file from them. On subsequent compilations, instead of recompiling this set of headers, the compiler loads the PCH file and then compiles only the unique portion of your translation unit.

| Translation unit | Common headers | Unique portion |
|---|---|---|
| a.cpp | PCH | Unique includes |
| b.cpp | PCH | Unique includes |
| c.cpp | PCH | Unique includes |
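To make the model concrete, here is a minimal sketch in which a cache keyed by the ordered list of common headers “parses” that list only once, no matter how many translation units request it. `ParsedState` and `PchCache` are hypothetical stand-ins; a real PCH stores compiler state in a file, not a list of header names.

```cpp
#include <map>
#include <string>
#include <vector>

// Hypothetical stand-in for the parser's state after processing a set of headers.
struct ParsedState {
    std::vector<std::string> headers;  // headers whose declarations are loaded
};

class PchCache {
public:
    // Returns the parsed state for an ordered list of common headers,
    // doing the expensive "parse" only the first time this exact list is seen.
    const ParsedState& load(const std::vector<std::string>& commonHeaders) {
        auto it = cache_.find(commonHeaders);
        if (it == cache_.end()) {
            ++parseCount_;  // the costly step happens once per distinct header list
            it = cache_.emplace(commonHeaders, ParsedState{commonHeaders}).first;
        }
        return it->second;
    }

    int parseCount() const { return parseCount_; }

private:
    // Keyed by the ordered list, so the same headers in a different order miss.
    std::map<std::vector<std::string>, ParsedState> cache_;
    int parseCount_ = 0;
};
```

Loading the same header list for a.cpp, b.cpp, and c.cpp increments `parseCount()` only once; only each file's unique includes still need to be parsed separately.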

This model comes with an important caveat: the “common” portion of your headers must be the first files compiled in each translation unit, and they must appear in the same order in every translation unit. Most developers therefore gather these includes into a single common header file; this is what stdafx.h in the Visual C++ project templates is intended for.
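The ordering requirement amounts to a prefix check: a translation unit can use a PCH only if its include list begins with the PCH’s headers, in the same order. The sketch below models that rule with a hypothetical `canUsePch` function; it compares header names only and ignores anything else (such as compiler options) that a real compiler would also have to match.

```cpp
#include <algorithm>
#include <string>
#include <vector>

// True if the translation unit's include list starts with exactly the
// PCH's header list, in the same order (a prefix match on header names).
bool canUsePch(const std::vector<std::string>& tuIncludes,
               const std::vector<std::string>& pchHeaders) {
    return tuIncludes.size() >= pchHeaders.size() &&
           std::equal(pchHeaders.begin(), pchHeaders.end(), tuIncludes.begin());
}
```

With stdafx.h as the single common header, the check reduces to asking whether stdafx.h is the first include in each .cpp file.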

Leveraging PCH for the Intellisense Compiler

In general, if you have PCH set up for use with the build compiler, the intellisense compiler picks up those PCH options and generates a PCH it can use. Because the intellisense compiler uses a different PCH format from the build compiler, it creates separate PCH files for its own use. These files are typically stored under your main solution directory, in a subdirectory named ‘ipch’. (Future releases may have the command-line and intellisense compilers share these PCHs, but for now they are separate.)

The intellisense compiler can load these iPCH files to save not only parse time but also memory: all translation units that share a common PCH share the memory for the loaded PCH, which further reduces the working set. A properly set-up PCH scheme for your solution can therefore make your intellisense requests execute much more rapidly and reduce memory consumption.
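The memory benefit can be seen with some back-of-the-envelope arithmetic. The sketch below compares a hypothetical working set in which every translation unit holds its own copy of the common-header state against one in which all of them share a single loaded iPCH. The sizes are made-up illustrative units, and the model assumes a loaded iPCH takes about as much memory as one freshly parsed copy of the same headers.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical model, sizes in arbitrary units.
// Without sharing, every TU carries its own copy of the common-header state.
std::size_t workingSetWithoutSharing(std::size_t commonSize,
                                     const std::vector<std::size_t>& uniqueSizes) {
    std::size_t total = 0;
    for (std::size_t u : uniqueSizes) total += commonSize + u;
    return total;
}

// With a shared iPCH, the common state is counted once for all TUs.
std::size_t workingSetWithSharedPch(std::size_t pchSize,
                                    const std::vector<std::size_t>& uniqueSizes) {
    std::size_t total = pchSize;
    for (std::size_t u : uniqueSizes) total += u;
    return total;
}
```

For example, with a 40-unit common set and three translation units whose unique portions are 5, 3, and 2 units, the unshared total is 130 units while the shared total is 50; the gap grows with every additional translation unit.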

Here are some important things to keep in mind when configuring your project for iPCH:

· iPCH and build compiler PCH share the same configuration settings (configurable on a per-project or per-file basis through “Configuration Properties->C/C++->Precompiled Headers”).

· The iPCH should contain the largest possible set of common headers, excluding headers you edit frequently (editing a header that is part of the iPCH invalidates it).

· All translation units should include the common headers in the same order. This is often best arranged through a single “master” header file that includes all the other common headers, with each .cpp file including this master header first, in place of the individual common headers.

· You can have different iPCH files for different translation units – but only one iPCH file can be used for any given translation unit.

· The intellisense compiler will not create an iPCH file if the headers it would contain have errors. Open the Error List window, look for any intellisense errors, and eliminate them to get iPCH working.

· If you suspect that an iPCH file has become corrupted, you can shut down the IDE and delete the ipch directory.
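The guidelines on choosing and ordering the common headers can be combined into one rough heuristic, sketched below under stated assumptions: given each translation unit’s ordered include list, take the longest run of leading headers that every unit shares, and stop at the first header that is edited often. `chooseCommonHeaders` is a hypothetical helper, not a Visual Studio feature. Because the common headers must form a prefix, it stops at a frequently edited header instead of skipping it; in a real project you could instead move that header below the common block.

```cpp
#include <cstddef>
#include <set>
#include <string>
#include <vector>

// Hypothetical helper: returns the longest run of leading headers that every
// translation unit includes in the same order, cut off before the first
// header listed in frequentlyEdited (editing it would invalidate the iPCH).
std::vector<std::string> chooseCommonHeaders(
    const std::vector<std::vector<std::string>>& tus,
    const std::set<std::string>& frequentlyEdited) {
    std::vector<std::string> common;
    if (tus.empty()) return common;
    for (std::size_t i = 0; i < tus.front().size(); ++i) {
        const std::string& candidate = tus.front()[i];
        if (frequentlyEdited.count(candidate) != 0) break;
        // Every TU must have the same header at the same position.
        for (const auto& tu : tus) {
            if (i >= tu.size() || tu[i] != candidate) return common;
        }
        common.push_back(candidate);
    }
    return common;
}
```

The result is what the “master” header would include; since only one iPCH can be used per translation unit, units that do not share this prefix would need their own common header.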
