Linker Enhancements in Visual Studio 2013 Update 2 CTP2

For developer scenarios, linking takes the lion’s share of the application’s build time. From our investigation we know that the Visual C++ linker spends a large fraction of its time in preparing, merging and finally writing out debug information. This is especially true for non-Whole Program Optimization scenarios.

In Visual Studio 2013 Update 2 CTP2, we have added a set of features which help improve link time significantly as measured by products we build here in our labs (AAA Games and Open source projects such as Chromium):

- Remove unreferenced data and functions (/Zc:inline). This can help all of your projects.

- Reduce time spent generating PDB files. This applies mostly to binaries with medium to large amounts of debug information.

- Parallelize code-generation and optimization build phase (/cgthreads). This applies to medium to large binaries generated through LTCG.

Not all of these features are enabled by default. Keep reading for more details.

Remove unreferenced data and functions (/Zc:inline)

As part of our analysis, we found that we were unnecessarily bloating object files by emitting symbol information even for unreferenced functions and data. This gave the linker additional, useless input that linker optimizations would eventually throw away.

With /Zc:inline on the compiler command line, the compiler removes this unreferenced code and data itself and so produces less input for the linker, improving end-to-end linker throughput.

New Compiler Switch: /Zc:inline[-]. Removes an unreferenced function or data item if it is a COMDAT or has internal linkage only (off by default).

Throughput Impact: Significant (double-digit (%) link improvements seen when building products like Chromium)

Breaking Change: Possibly, for code that does not conform to the C++11 standard. With this feature turned on, such code can fail to link with an unresolved external symbol error, but the workaround is very simple. Take a look at the example below:

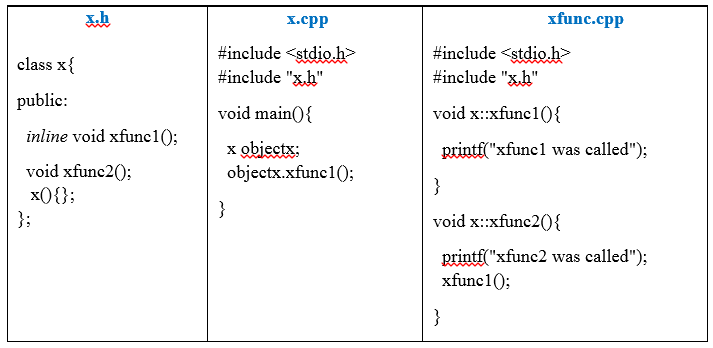

If you are using VS2013 RTM, this sample program will compile (cl /O2 x.cpp xfunc.cpp) and link successfully. However, if you compile and link with VS2013 Update 2 CTP2 with /Zc:inline enabled (cl /O2 /Zc:inline x.cpp xfunc.cpp), the sample will choke and produce the following error message:

xfunc.obj : error LNK2019: unresolved external symbol "public: void __thiscall x::xfunc1(void)" (?xfunc1@x@@QAEXXZ) referenced in function _main

x.exe : fatal error LNK1120: 1 unresolved externals

There are three ways to fix this problem.

- Remove the ‘inline’ keyword from the declaration of function ‘xfunc1’.

- Move the definition of function ‘xfunc1’ into the header file “x.h”.

- Simply include “x.cpp” in xfunc.cpp.
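As a rough illustration of the second fix, here is a minimal single-file sketch. It is not the original sample: the type name x and the function xfunc1 come from the error message above, while the int return value and the call_xfunc1 helper are assumptions added purely for illustration. The comments mark which parts would live in x.h and which in the calling source file.

```cpp
#include <cassert>

// ---- would live in x.h (hypothetical reconstruction) ----
struct x {
    // Declared inline: C++11 requires the definition to be visible in
    // every translation unit that calls this function.
    inline int xfunc1();
};

// The definition sits in the header next to the declaration, rather
// than in x.cpp where other translation units cannot see it.
// (The int return value is an assumption made for this sketch.)
inline int x::xfunc1() { return 42; }

// ---- would live in the caller, e.g. xfunc.cpp ----
// call_xfunc1 is a hypothetical helper standing in for main().
int call_xfunc1() {
    x obj;
    return obj.xfunc1();  // the definition is visible here, so the
                          // caller no longer depends on x.obj for it
}
```

Because every caller now sees the definition, nothing breaks when the compiler drops the unreferenced copy from another object file.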

Applicability: All scenarios except LTCG/WPO and some debug scenarios should see a significant speedup.

Reduce time spent generating PDB files

This feature significantly improves type-merging speed by increasing the size of our internal data structures (hash tables and such). For larger PDBs, this increases the file size by a few MB at most but can reduce link times significantly. This feature is enabled by default.

Throughput Impact: Significant (double-digit (%) link improvements for AAA games)

Breaking Change: No

Applicability: All scenarios except LTCG/WPO should see a significant speedup.

Parallelize code-generation and optimization build phase (/cgthreads)

This feature parallelizes the code-generation and optimization phase of compilation across multiple threads. By default, we currently use four threads for this phase. With machines offering more resources (CPU, IO, etc.), a few extra build threads can help. The feature is especially effective for Whole Program Optimization (WPO) builds.

There are already multiple levels of parallelism that can be specified for a build. The /m (or /maxcpucount) switch specifies the number of msbuild.exe processes that can run in parallel, whereas the /MP (Multiple Processes) compiler flag specifies the number of cl.exe processes that can compile source files simultaneously.

The /cgthreads flag adds another level of parallelism: it specifies the number of threads used for the code-generation and optimization phase within each individual cl.exe process. If /cgthreads, /MP and /m are all set too high, it is quite possible to bring the build system to its knees and make it unusable, so use with caution!
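To get a feel for how quickly these three levels multiply, here is a small back-of-the-envelope sketch. The function and parameter names are hypothetical, and the result is only a rough upper bound that assumes every msbuild.exe process has all of its cl.exe processes in the code-generation phase at the same moment.

```cpp
#include <cassert>

// Rough upper bound on simultaneously running code-generation threads.
// All names here are made up for illustration.
//   msbuild_processes : value given to /m (or /maxcpucount)
//   cl_processes      : value given to /MP
//   cg_threads        : value given to /cgthreads (1 to 8)
constexpr int worst_case_codegen_threads(int msbuild_processes,
                                         int cl_processes,
                                         int cg_threads) {
    // Each msbuild.exe process can start cl_processes compilers, and
    // each compiler can run cg_threads code-generation threads.
    return msbuild_processes * cl_processes * cg_threads;
}
```

For example, building with /m:4, /MP8 and /cgthreads4 could in the worst case ask for 4 x 8 x 4 = 128 threads. A real build will rarely reach that bound, but it is a handy sanity check against the number of cores on the build machine.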

New Compiler Switch: /cgthreadsN, where N is the number of threads used for optimization and code generation. N can be any value from 1 to 8.

Breaking Change: No. Note that this switch is not officially supported yet; we are considering making it a supported feature, so your feedback is important!

Applicability: This should make a definite impact for Whole Program Optimization scenarios.

Wrapping Up

This post gives an overview of a set of features we have enabled in the latest CTP that should help improve link throughput. Our focus so far has been on larger projects, so these wins should be most noticeable for projects such as Chrome.

Please give them a shot and let us know how it works out for your application. It would be great if you folks can post before/after numbers on linker throughput when trying out these features.

If your link times are still painfully slow, please email me, Ankit, at aasthan@microsoft.com. We would love to know more!

Thanks to C++ MVP Bruce Dawson, Chromium developers and the Kinect Sports Rivals team for validating that our changes had a positive impact in real-world scenarios.
