Tuning C++ build parallelism in VS2010

A great way to get fast builds on a multiprocessor computer is to take advantage of as much parallelism in your build as possible. If you have C++ projects, there are two different kinds of parallelism you can configure.

What are the dials I can set?

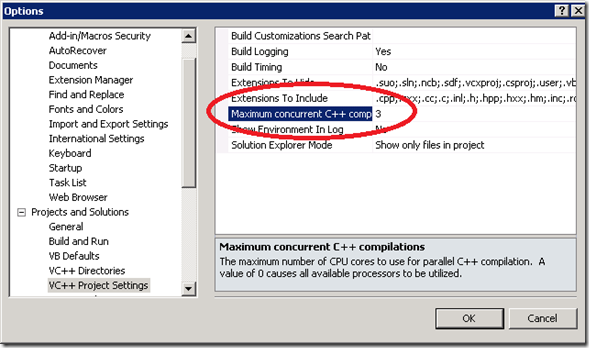

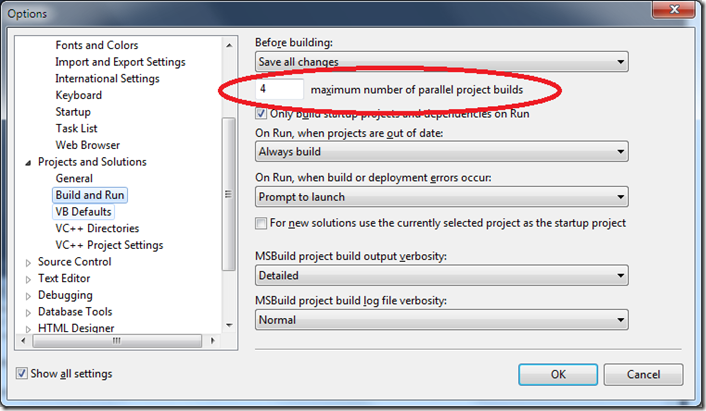

Project-level parallel build, which is controlled by MSBuild, is set at the solution level in Visual Studio. (Visual Studio actually stores the value per-user per-computer, which may not always be what you want: you may want different values for different solutions, and the UI doesn’t allow that.) By default Visual Studio picks the number of processors on your machine. Do some experiments with slightly higher and lower numbers to see what gives the best speed for your particular code. Some people like to dial it down a little so that they can do other work while a build goes on.

This dial is just the same as VS2008, although under the covers MSBuild is taking over some of the work from Visual Studio now.

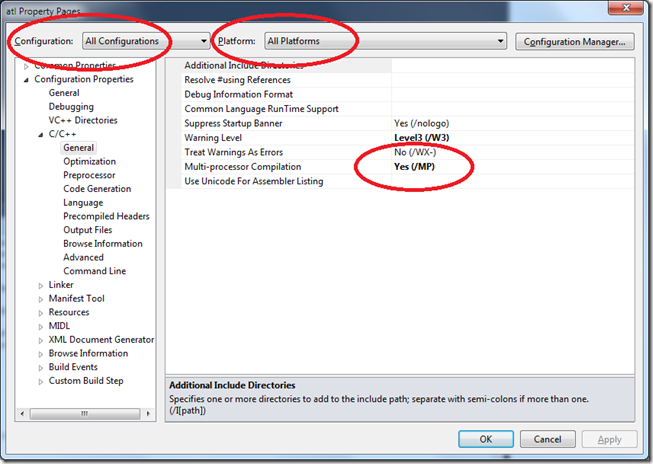

If you’re building C++ or C++/CLI, there’s another place you can get build parallelism. The CL compiler supports the /MP switch, which tells it to build subsets of its inputs concurrently with separate instances of itself. The default number of buckets, again, is the number of CPUs, but you can also specify a number, like /MP5. Again, this was available before, so I’m going to just remind you where the value is and what it looks like in the MSBuild format project file.

Go to your project’s property pages, and to the C/C++, General page. For now I suggest that you select All Configurations and All Platforms. You can be more selective later if you want.

As usual you can see what’s in the project file by unloading it, right clicking on the node in the Solution Explorer, and choosing Edit:

Here’s what it looks like in the project file. Yes, it’s inside a configuration and platform specific block, but it put the same value in all of them.

Notice that it’s in an “ItemDefinitionGroup”. That MSBuild tag simply indicates it defines a “template” for items of a particular type. In this case, all items of type “ClCompile” will automatically have metadata MultiProcessorCompilation with value true unless they explicitly choose a different value.
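As a rough sketch of the fragment described above, assuming a project with the default Debug|Win32 and Release|Win32 configurations (your configuration names and the surrounding settings may differ):

```xml
<!-- Sketch only: one ItemDefinitionGroup per configuration/platform pair. -->
<ItemDefinitionGroup Condition="'$(Configuration)|$(Platform)'=='Debug|Win32'">
  <ClCompile>
    <!-- Default metadata for every ClCompile item: compile with /MP -->
    <MultiProcessorCompilation>true</MultiProcessorCompilation>
  </ClCompile>
</ItemDefinitionGroup>
<ItemDefinitionGroup Condition="'$(Configuration)|$(Platform)'=='Release|Win32'">
  <ClCompile>
    <MultiProcessorCompilation>true</MultiProcessorCompilation>
  </ClCompile>
</ItemDefinitionGroup>
```

A real project file will normally have other metadata (warning level, optimization and so on) alongside MultiProcessorCompilation in the same ClCompile block.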

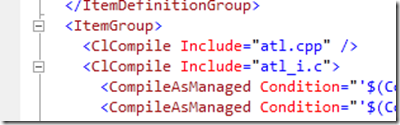

By the way, MSBuild Items, in case you’re wondering, are just files, usually. Their subelements, if any, are the metadata. Here’s what some look like. Notice they’re in an “ItemGroup”:
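For illustration, a minimal ItemGroup might look something like this. The file names are hypothetical, and the PrecompiledHeader metadata is only there to show a subelement on an item:

```xml
<ItemGroup>
  <!-- An item with no metadata of its own: it picks up the defaults
       from any matching ItemDefinitionGroup. -->
  <ClCompile Include="main.cpp" />
  <!-- An item carrying its own metadata as a subelement. -->
  <ClCompile Include="stdafx.cpp">
    <PrecompiledHeader>Create</PrecompiledHeader>
  </ClCompile>
</ItemGroup>
```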

Because this is metadata, at an extreme, I could actually set it on a per-file basis. In that case, MSBuild would bucket together all the inputs that have a common value. You would need to disable /MP for particular files that use #import, for example, because that’s not supported with /MP. (Other features not supported with /MP are /Gm, which enables minimal rebuild, and a few other switches listed in the /MP compiler option documentation.)

Note it’s under the “ItemGroup” because these are actual items:
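A sketch of such a per-file override, assuming a hypothetical source file named UsesImport.cpp that contains a #import directive:

```xml
<ItemGroup>
  <!-- Hypothetical file that uses #import: opt it out of /MP explicitly. -->
  <ClCompile Include="UsesImport.cpp">
    <MultiProcessorCompilation>false</MultiProcessorCompilation>
  </ClCompile>
  <!-- Other files keep the default value from the ItemDefinitionGroup. -->
  <ClCompile Include="Widget.cpp" />
  <ClCompile Include="Gadget.cpp" />
</ItemGroup>
```

The explicit value on the item overrides the template value, so only that one file is compiled without /MP.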

Back to multiprocessor CL. If you want to tell CL explicitly how many parallel compiles to do, Visual Studio lets you do this – as for /MP, it’s exposed as a global setting:

Under the covers, VS passes this on by setting a property (a global property – it’s not persisted) named CL_MPCount. That means it won’t have any effect when building outside of VS.

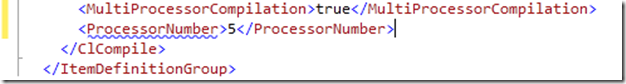

If you want to choose a value at a finer-grained level you can’t use the UI, as it’s not exposed in the property pages or the command line preview. You have to go into the project file editor and type it. It’s a different piece of metadata on the ClCompile items, named “ProcessorNumber”. It takes a number from 1 to as high as you like, and that number is appended to the /MP switch (for example, /MP4). It is ignored unless <MultiProcessorCompilation> is also enabled.
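Typed by hand, the metadata might look roughly like the following sketch. The value 4 is an arbitrary example, and the unconditioned ItemDefinitionGroup is an assumption; you could equally put it inside a configuration-specific block:

```xml
<ItemDefinitionGroup>
  <ClCompile>
    <!-- ProcessorNumber only takes effect when this is true -->
    <MultiProcessorCompilation>true</MultiProcessorCompilation>
    <!-- Example value: should result in /MP4 being passed to CL -->
    <ProcessorNumber>4</ProcessorNumber>
  </ClCompile>
</ItemDefinitionGroup>
```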

The squiggle here is a minor bug – ignore it.

What about building on the command line?

The /MP settings come from the project files, so they work exactly the same on the command line. That’s part of the whole point of MSBuild, right, the same build on the command line as in Visual Studio? But the global parallelism setting that you set in Tools, Options does not affect the command line. You must pass it yourself to the msbuild.exe command with the /m switch. Again, the number is optional: plain /m uses the number of CPUs. However, unlike Visual Studio, out of the box, if you don’t supply /m at all, msbuild.exe uses 1 CPU. That might change in future.

To choose the number on any /MP value, you can set an environment variable, or pass a property, named CL_MPCount, just like Visual Studio does.

Setting /MP on every project is tiresome, what are my options?

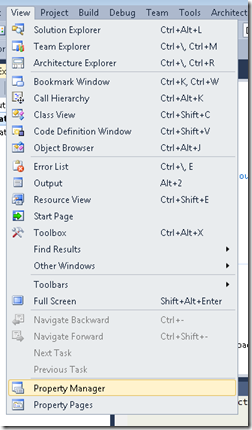

Probably you’ll want to use /MP on more than one of your projects, and you don’t want to edit each individually. The Visual Studio solution to this kind of problem is property sheets. They don’t have any special connection to multiprocessor build, but it’s an opportunity for me to give a quick refresher using this as an example. First open the “Property Manager” from the View menu. Its exact location will vary depending on the settings you’re using; here’s where it is if you have C++ settings:

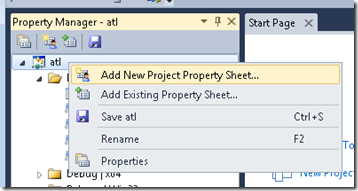

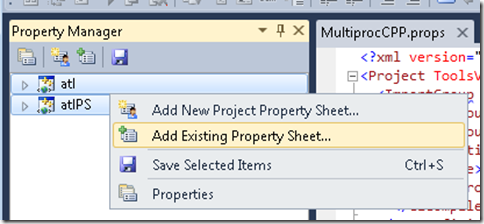

Right click on a project and choose “Add New Property Sheet”:

I gave mine the name “MultiprocCpp.props”. You’ll see it gets added to all configurations of this project. Right click on it, and you’ll see the same property pages that the project has, but this time you’re editing the property sheet. Again, set “Multi-processor Compilation” to “Yes”. Close the property pages, select the property sheet in the Property Manager, and hit Save.

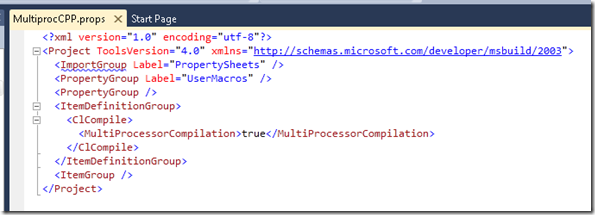

Now I can open up that new MultiprocCpp.props file in the editor, and I see this:
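As an approximation of what the generated file contains (the empty placeholder groups Visual Studio writes may vary, so treat the details as an assumption):

```xml
<?xml version="1.0" encoding="utf-8"?>
<Project ToolsVersion="4.0" xmlns="http://schemas.microsoft.com/developer/msbuild/2003">
  <!-- Placeholder groups that Visual Studio typically emits -->
  <ImportGroup Label="PropertySheets" />
  <PropertyGroup Label="UserMacros" />
  <!-- The one setting this sheet actually contributes -->
  <ItemDefinitionGroup>
    <ClCompile>
      <MultiProcessorCompilation>true</MultiProcessorCompilation>
    </ClCompile>
  </ItemDefinitionGroup>
  <ItemGroup />
</Project>
```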

(Again, ignore the squiggle.)

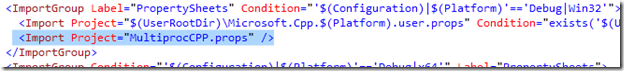

Looking in the project file, you can see the property sheet pulled in to each configuration, using an “Import” tag. Think of that just like a #include in C++:
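A sketch of what one of those per-configuration import blocks might look like, assuming the property sheet sits next to the project file and the configuration is Debug|Win32:

```xml
<ImportGroup Label="PropertySheets" Condition="'$(Configuration)|$(Platform)'=='Debug|Win32'">
  <!-- The standard per-user sheet that Visual Studio adds by default -->
  <Import Project="$(UserRootDir)\Microsoft.Cpp.$(Platform).user.props"
          Condition="exists('$(UserRootDir)\Microsoft.Cpp.$(Platform).user.props')"
          Label="LocalAppDataPlatform" />
  <!-- The new property sheet, pulled in much like a #include -->
  <Import Project="MultiprocCpp.props" />
</ImportGroup>
```

Expect one such ImportGroup for each configuration and platform combination in the project.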

So now we have the definition we put in the project file before, but in a reusable form. Given that, I can put it into all the projects I want in one shot, by multi-selecting in the Property Manager and choosing Add Existing Property Sheet:

Now all your projects compile with /MP!

In some circumstances, you might want to go beyond what you can easily do in the Property Manager. For example you might want to bulk-remove a property sheet, or put a property sheet in each project once outside of all the configurations. Fortunately MSBuild 4.0 has a powerful and complete object model over its files that you can use to do this kind of work in a few lines of code. More on that in a future blog post, but for now, if you want to take a look, point the Object Browser at Microsoft.Build.dll.

Before I leave property sheets, it’s worth mentioning that you can do this kind of common-importing in your own ways, if you don’t mind losing some of the UI support. For example, in the build of VS itself, we pull in a common set of properties at the top of every project, like this example from the project that builds msenv.dll (which contains much of the VS shell)

Within that we define all kinds of global settings, and import yet others. I’ll talk about this kind of structure in a future blog post about the organization of large build trees.

Too much of a good thing

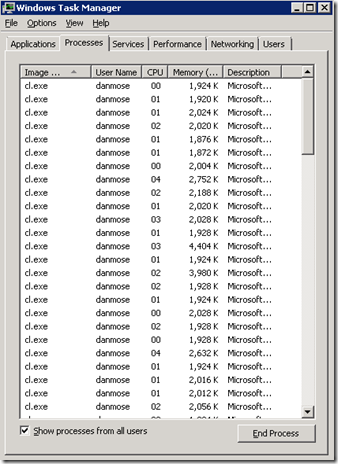

Usually the problem is getting enough parallelism to exploit all your machine’s cores. But the reverse problem is possible, and although it’s a nice problem to have, it needs fixing because it will cause your machine to thrash. Here’s what Task Manager might look like when this is happening:

In this case, on a box with 8 CPUs, I enabled /MP on all the projects in the solution, and then built it with msbuild.exe /m. (I didn’t need to use the command line to have this problem; the same could happen in Visual Studio.) If dependencies don’t prevent it, MSBuild will kick off 8 projects at once, and in each of those CL will run 8 instances of itself at once, so we could have up to 8 × 8 = 64 copies of CL all fighting over my cores and my disk. Not a recipe for performance.

You can expect that one day the system will auto-tune itself here, but for now, if you have this problem, you will need to do some manual adjustment. Here are some ideas:

Dial down the values globally

Reduce /m:4 to /m:3, for example, or use a property sheet to change /MP to /MP2, say. Easy, but a blunt instrument: if there are points elsewhere in your build where there is a lot of project parallelism but not much CL parallelism, or vice versa, you probably just slowed them down.

Tune /MP for each project and configuration

A project that compiles at a relatively parallelized point in the build is not such a good candidate for /MP, for example. You might adjust by configuration as well. Retail configuration can be much slower to build because the compiler’s optimizing more: that might make it interesting to enable /MP for Retail and not Debug.

Get super custom

In your team, you might have a range of hardware. Perhaps your developers have 2-CPU machines, but your nightly build is on an 8-CPU beast. Yet they both need to build the same set of sources, and you don’t want any box to be either slow or thrashing. In this case, you could use environment variables, and Conditions on the MSBuild tags. Almost all MSBuild tags can have Conditions.

Here’s an example below. When a property “MultiprocCLCount” (which I just invented) has a value, and it’s greater than 0, /MP is enabled with that value.
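One way this could be written is sketched below. “MultiprocCLCount” is the invented property name from the text. The exact form of the Condition is my assumption: it relies on MSBuild skipping the right-hand side of “and” when the left-hand side is false, so the numeric comparison only runs when the property has a value.

```xml
<!-- Enable /MP<n> only when MultiprocCLCount is set and greater than 0.
     The emptiness check comes first so the numeric comparison is never
     attempted on an empty string. -->
<ItemDefinitionGroup Condition="'$(MultiprocCLCount)' != '' and '$(MultiprocCLCount)' &gt; '0'">
  <ClCompile>
    <MultiProcessorCompilation>true</MultiProcessorCompilation>
    <ProcessorNumber>$(MultiprocCLCount)</ProcessorNumber>
  </ClCompile>
</ItemDefinitionGroup>
```

Placed in a shared property sheet, a block like this lets each machine choose its own /MP level without any change to the project files.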

MSBuild pulls in all environment variables as its initial properties when it starts up. So on my fast machine, I set an environment variable MultiprocCLCount=8, and on my developer boxes, I set MultiprocCLCount=2.

The build machine’s script could also parameterize the /m switch going to MSBuild.exe, like /m:%MultiprocMSBuildCount%

Two other properties that might be useful in exotic conditions: $(Number_Of_Processors) is the number of logical cores on the box; this just comes from the NUMBER_OF_PROCESSORS environment variable. $(MSBuildNodeCount) is the value that was passed to /m on msbuild.exe, or within VS, the value from Tools>Options for project parallelism.
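If you want to see what these two properties evaluate to on a given machine, a throwaway project file along these lines should print them. The target name “ShowParallelism” is made up for this sketch:

```xml
<?xml version="1.0" encoding="utf-8"?>
<Project ToolsVersion="4.0" xmlns="http://schemas.microsoft.com/developer/msbuild/2003">
  <!-- Hypothetical diagnostic target: logs the two values at high
       importance so they appear at the default verbosity. -->
  <Target Name="ShowParallelism">
    <Message Importance="high" Text="Logical processors: $(NUMBER_OF_PROCESSORS)" />
    <Message Importance="high" Text="MSBuild node count: $(MSBuildNodeCount)" />
  </Target>
</Project>
```

Running it with different /m values (and with no /m at all) is a quick way to confirm what the command line is actually passing through.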

That’s it. I hope while walking you through /m and /MP I’ve also given you an overview of some MSBuild features and how much flexibility they give you to configure your build process.

Optimizing your build speed is a huge topic so look for more blogging on this subject from me.

Dan – Developer Lead, MSBuild
